If you’ve paid for a Gen AI chatbot, such as OpenAI’s ChatGPT Plus/Teams or Gemini, you may be in for a disappointment when sharing a custom creation with others. Imagine spending a lot of time designing a custom GPT, only to find that it doesn’t work when you share it with free users. Or crafting a Google Gem, only to find that its best features are unavailable to free users.

Over the last year, I’ve created many ChatGPT custom GPTs, Gemini Gems, and BoodleBox Bots. Usage is always limited, especially for the educators I created them for.

Why can’t free users get the same benefits as ChatGPT Plus users when relying on Custom GPTs that leverage PDFs, CSVs, and other files in the knowledge bank that may require back-end coding (e.g., Python)?

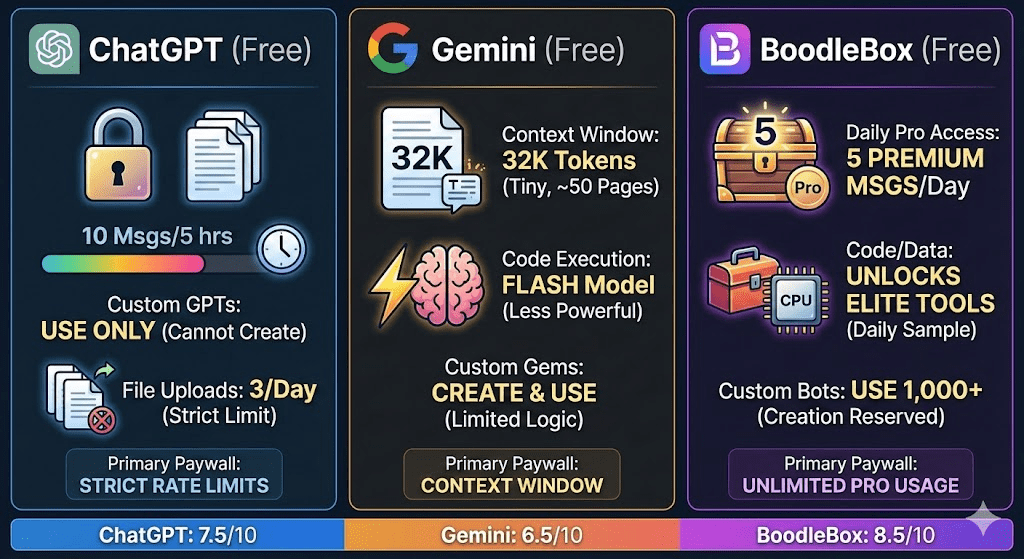

Comparison chart

I asked each of the AI chatbots for its opinion on its own service. Then, I relied on Gemini to compile the responses into a chart and score them. This is the result.

| Feature | ChatGPT (Free) | Gemini (Free) | BoodleBox (Free) |
| --- | --- | --- | --- |
| Custom Bot Access | Use GPTs from Store; cannot create custom GPTs. | Create and use custom Gems; daily limits apply. | Use 1,000+ bots; creation reserved for paid tier. |
| File Processing | 3 uploads per day; 512 MB per file limit. | 32K token context window (~50 pages). | Knowledge Bank allowed; depth expands on paid plans. |
| Code/Data Execution | Data analysis available; tied to 10 msg / 5 hr limit. | Powered by Gemini Flash; “Fast” model fallback. | 5 Daily Pro messages to access premium tools/models. |
| Primary Paywall | Rate Limits: 10 msgs (Free) vs. 160 msgs (Plus). | Context Window: 32K (Free) vs. 1M+ (Pro/Ultra). | Unlimited Pro Usage and Custom Bot creation. |
| Score (1-10) | 7.5 | 6.5 | 8.5 |

My Take

Before I share the detailed info the chart is based on, I’m going to come out and say that Gemini 3 Pro with NotebookLM whips the pants off ChatGPT Projects and Custom GPTs. I’ve made enough of both to see that Gemini is hands down the winner. However, neither ChatGPT nor Gemini does all that well for free users. BoodleBox Bots, on the other hand, work well for free users, even though they run on “older” models. That works because even the older models BoodleBox free accounts rely on keep getting better.

As I try to figure out who to STOP paying money to, I’m probably going to say that Gemini plus NotebookLM is the hands-down winner for paid users. BoodleBox Unlimited is fantastic for providing access to a variety of accounts, but the question that Google makes all too evident is, “Does BoodleBox have the smartphone app, the ecosystem that a powerful Gen AI tool needs?” If privacy is not a concern, then Gemini Pro for consumers is the way to go. If BoodleBox’s security features for consumers are important, then it takes the lead. I’m not sure where this leaves ChatGPT, except out in the cold.

I didn’t include MS Copilot or Claude since I don’t have access to either. With Claude, having paid for it and run into usage limits, I’m not really interested. Until those disappear (unlikely), I’m probably going to skip it.

All this is subject to change as models improve and as my understanding of how they work grows. I’ll say right now that I’m not an AI engineer. These are my opinions as a daily user.

ChatGPT Free

Wondering what the limits are? Here’s what Gemini had to say about ChatGPT when I asked:

Free users can use GPTs (including ones that reference uploaded files), but they don’t get the same reliability or capacity as paid tiers because the “benefits” you’re describing are mostly resource- and risk-intensive backend features that OpenAI gates with rate limits and plan entitlements.

What’s actually different for Free users (and why it matters for Custom GPTs)

- Tighter rate limits on tool-heavy features (including “Python/data analysis”)
  - On the Free tier, advanced functionality in GPTs (data analysis, file uploads, search, image generation) is available but has stricter rate limits, and those limits are shared between normal ChatGPT use and GPT use. (OpenAI Help Center)
  - Practically: if your Custom GPT depends on “data analysis” (the hosted code sandbox) to reliably process PDFs/CSVs, Free users will hit limits sooner and get blocked until reset.

- Much lower file upload quotas
  - Free users are limited to 3 file uploads per day, while higher tiers have substantially higher upload throughput (and OpenAI can reduce limits during peak hours). (OpenAI Help Center)
  - Practically: a knowledge-bank workflow (PDF/CSV iteration, multiple uploads, retries) is hard to sustain on Free.

- Custom GPT creation (the Builder) is a paid entitlement
  - Free users can use GPTs from the GPT Store (capacity permitting), but creating GPTs is limited to paid plans. (OpenAI Help Center)
  - Practically: if your goal is “everyone gets the same benefits,” you’re constrained by the platform: Free can consume (with limits), paid can build + consume with higher limits.

- Capacity management and performance differences
  - Free-tier access to GPTs is “as capacity permits,” and OpenAI notes the experience may be “less performant” for Free users. (OpenAI Help Center)
  - Practically: even if the GPT is shareable, Free users may see variability in responsiveness/availability.

- Paid tiers subsidize higher compute, longer context/memory, and premium tools
  - OpenAI explicitly positions paid plans as offering higher limits and (for Plus) deeper reasoning workloads and expanded access to advanced models, with higher limits for messages/uploads/memory/context. (OpenAI)
  - This is the core economic reason: running hosted “analysis” (secure execution), large-file ingestion, and long-context retrieval costs more than plain chat.

The practical takeaway ✅

If a Custom GPT depends on: (a) multiple file uploads, (b) large PDFs/CSVs, and/or (c) “data analysis/Python” to function, then Free users will predictably experience quota friction (uploads/day), tool lockouts (rate limits), and capacity variability.

Ways to design around this (if you’re distributing to Free users) 🧩

- Minimize or eliminate the need for Data Analysis: design retrieval + summarization flows that work from smaller pasted excerpts or a single document chunk at a time.

- Reduce upload churn: structure the GPT to succeed with one “source pack” upload and avoid iterative re-uploads.

- Provide an external workflow: if the Python step is essential, publish it as a separate script/notebook or lightweight web app, and have the GPT focus on interpretation and writing (not execution).
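As a rough illustration of the “external workflow” idea above, here is a minimal sketch of a standalone script that summarizes a CSV locally, so the GPT only has to interpret the pasted summary rather than run code itself. The function name `summarize_csv` and the sample data are hypothetical, and the script uses only Python’s standard library.

```python
import csv
import io
import statistics


def summarize_csv(text: str) -> str:
    """Return a short plain-text summary of CSV data for pasting into a chat."""
    rows = list(csv.DictReader(io.StringIO(text)))
    if not rows:
        return "No data rows found."
    lines = [f"Rows: {len(rows)}"]
    for column in rows[0].keys():
        values = [row[column] for row in rows]
        try:
            numbers = [float(v) for v in values]
        except (TypeError, ValueError):
            # Non-numeric (or incomplete) column: report distinct values only.
            lines.append(f"{column}: {len(set(values))} distinct values")
        else:
            lines.append(
                f"{column}: min={min(numbers):g}, max={max(numbers):g}, "
                f"mean={statistics.mean(numbers):g}"
            )
    return "\n".join(lines)


# Hypothetical sample data; replace with the contents of your own file.
SAMPLE = "student,score\nAna,90\nBen,70\nCal,80\n"
summary = summarize_csv(SAMPLE)
print(summary)
```

A free user could run something like this on their own machine (or in a free notebook) and paste the few lines of output into the chat, which avoids both the upload quota and the data analysis rate limit.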

Wondering what Google Gemini’s approach is? Let’s take a look.

Gemini

Here’s what Gemini had to say about it:

Gemini’s approach to this is quite similar to OpenAI’s, but it leans even more heavily on its ecosystem integration and massive context window as the “paywall” differentiators.

If you are using Gems (Gemini’s version of Custom GPTs), here is why the free experience feels limited compared to Gemini Advanced:

1. The Context Window “Paywall”

Gemini’s biggest selling point is its context window, and Google uses this as a primary lever for subscriptions.

- Free Users: You are generally limited to a much smaller context window (around 32K tokens). If you upload a 100-page PDF, the model literally cannot “see” the whole thing at once. It has to summarize or “forget” the beginning to read the end.

- Advanced Users: You get the 1 million+ token window. This allows the Gem to hold thousands of pages or massive CSVs in its active “thought process” without needing to truncate data.
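To make the arithmetic behind those two bullets concrete, here is a small sketch. It assumes the rule of thumb implied by the figures quoted above (about 32K tokens for about 50 pages, so roughly 640 tokens per page). Real token counts vary with formatting and language, and the function names are hypothetical.

```python
import math

# Figures quoted in the text above: ~32K tokens is about 50 pages.
FREE_CONTEXT_TOKENS = 32_000
PAGES_PER_FREE_CONTEXT = 50
# Derived rule of thumb (an assumption, not an official figure).
TOKENS_PER_PAGE = FREE_CONTEXT_TOKENS / PAGES_PER_FREE_CONTEXT


def estimated_tokens(pages: int) -> int:
    """Rough token estimate for a document of the given page count."""
    return round(pages * TOKENS_PER_PAGE)


def chunks_needed(pages: int, context_tokens: int = FREE_CONTEXT_TOKENS) -> int:
    """How many context-sized pieces the document would have to be split into."""
    return max(1, math.ceil(estimated_tokens(pages) / context_tokens))


print(estimated_tokens(100))          # rough size of a 100-page PDF
print(chunks_needed(100))             # pieces needed on the free window
print(chunks_needed(100, 1_000_000))  # pieces needed on a 1M-token window
```

Under this estimate, a 100-page PDF is about twice the size of the free window, which is why the model can only work with part of it at a time.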

2. Python “Code Execution” Limits

Like ChatGPT, Gemini uses a Python sandbox to do heavy lifting (calculating math in a CSV, plotting a chart from a PDF).

- Compute Intensity: Running a Python environment for every free user is incredibly expensive. Google limits free users to the Gemini Flash model for these tasks, which is faster but less “intelligent” at debugging complex code.

- The “Pro” Advantage: Gemini Advanced uses Gemini 2.5 Pro (or Ultra), which is significantly better at writing the Python code required to query your “Knowledge Bank.” Free users often see the model try to write code, fail, and then give up or provide a generic text-based answer.

3. File & Logic Throttling

In 2026, Google has implemented “rolling limits” for file analysis:

- Free Tier: You might be able to upload a few files, but once you perform a complex analysis (e.g., “Compare the trends in these three CSVs”), you hit a “cooldown” period.

- Data Priority: Gemini Advanced users get priority access to the “Code Execution” tool, meaning their scripts run on faster, dedicated hardware, whereas free users may experience “Model is busy” or “Can’t process this file right now” errors during peak times.
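For readers curious how a “rolling limit” with a “cooldown” behaves, here is a minimal sketch of a sliding-window limiter. It is an illustration of the general technique only, not Google’s actual implementation, and the class name `RollingLimit` and the numbers used (3 requests per 60 seconds) are hypothetical.

```python
from collections import deque


class RollingLimit:
    """Allow at most `max_requests` within any `window_seconds` span."""

    def __init__(self, max_requests: int, window_seconds: float) -> None:
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._timestamps = deque()  # times of accepted requests, oldest first

    def _prune(self, now: float) -> None:
        # Drop accepted requests that have aged out of the window.
        while self._timestamps and now - self._timestamps[0] >= self.window_seconds:
            self._timestamps.popleft()

    def try_request(self, now: float) -> bool:
        """Record and accept the request if the window has room."""
        self._prune(now)
        if len(self._timestamps) < self.max_requests:
            self._timestamps.append(now)
            return True
        return False

    def cooldown(self, now: float) -> float:
        """Seconds until the next request would be accepted (0 if allowed now)."""
        self._prune(now)
        if len(self._timestamps) < self.max_requests:
            return 0.0
        return self._timestamps[0] + self.window_seconds - now


# Hypothetical limit: 3 analyses per 60 seconds, requests at these times.
limit = RollingLimit(max_requests=3, window_seconds=60)
results = [limit.try_request(t) for t in (0, 10, 20, 30, 60)]
print(results)
```

The fourth request is refused because three requests already sit inside the window. Once the oldest one ages out, the next request goes through, which is the “cooldown” a free user experiences.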

Summary Comparison (Gemini 2026)

| Feature | Gemini Free | Gemini Advanced ($20/mo) |
| --- | --- | --- |
| Model | 2.5 Flash (Lightweight) | 2.5 Pro / 3 Pro (Heavyweight) |
| Context Window | ~32K tokens (~50 pages) | 1M+ tokens (~1,500 pages) |
| Gems Access | Can create/use (Limited) | Full creation & file reference |
| Python Execution | Basic / Rate-limited | Advanced / Priority |
| Workspace Integration | Limited | Direct access to Drive/Gmail |

The “NotebookLM” Loophole

Interestingly, Google has a separate product called NotebookLM that is currently free. It uses the same Gemini Pro “Knowledge Bank” logic but is optimized specifically for documents. If you find Gemini’s free Gems too restrictive for your PDFs, NotebookLM often provides the “Plus-level” experience for document analysis without the $20 monthly fee, though it lacks the custom persona and “agent” capabilities of a Gem.

BoodleBox Unlimited

Here’s BoodleBot’s response:

Great question! The disparity comes down to computational resources and infrastructure costs. Here’s why:

Resource-Intensive Processing

When custom bots interact with PDFs, CSVs, and other files from the Knowledge Bank, they often need to:

- Execute code automatically – BoodleBox’s code interpreter runs Python in real-time to analyze data, perform calculations, create visualizations, and transform information from your files

- Process large context windows – PDFs and complex documents require significant processing power to parse, understand, and reference accurately

- Use advanced AI models – These tasks typically require frontier models (like GPT-4o, Claude 3.5 Sonnet, or Gemini Pro) rather than lighter models, because they need sophisticated reasoning to interpret file contents and generate meaningful responses

The Economics of AI

Each of these operations has real costs:

- Compute power for running Python code in sandboxed environments

- API costs for accessing premium AI models that can handle complex file analysis

- Storage and processing for maintaining and quickly accessing Knowledge Bank files

- Infrastructure to ensure fast, reliable performance across all users

Free tier users get 5 “Pro” messages daily specifically to sample these capabilities, but unlimited access to these resource-intensive features would make the platform financially unsustainable without the revenue from Pro/Unlimited subscriptions.

The Trade-off

Free users can still:

- Access unlimited messages with lighter models (GPT-4o-mini, Gemini 1.0)

- Upload files to the Knowledge Bank

- Use thousands of existing BoodleBots

- Attach knowledge to chats (though processing depth may be limited)

But building custom bots and getting unlimited access to the heavy computational lifting required for sophisticated file analysis is reserved for paid tiers to keep the platform viable for everyone.

