Welcome to Part One of this series. The link to Part Two appears at the very bottom.

After setting up an old laptop (Dell Alienware M15) with MX Linux, I decided to investigate the idea of setting up a knowledge wiki for Claude to access. I have to admit that working on MX Linux (KDE Plasma X11) the last two weeks since admitting defeat with ZorinLinux and PopOS! NVIDIA support has been a breeze. In fact, this was my first real venture with KDE Plasma, too. I usually prefer something a little less than “everything AND the kitchen sink.” I had been missing out.

While I found ZorinLinux to work great on another old machine I had access to, I found that on the Alienware M15, my machine kept freezing anywhere 15-20 minutes in. I must have tried dozens of fixes, none of which dealt with the issue. PopOS! (with its NVIDIA support) was going to do the trick, or so I thought, but then, it all went to heck with COSMIC. I’m still not sure what caused the problem (probably me trying to get the right software I needed for work to work).

Out of desperation, since I had tried MXLinux (e.g. ANTI-X, too) some years before, I gave it another go. What a beautiful, easy experience. As you can see of the screenshot below, I’ve got a million and one tools loaded, all of them working perfectly with my NVDIA GE Force card…that includes Whisper in the guise of the Buzz app for audio/video transcription, OpenShot, Shutter for screen capture, Signal, Mullvad VPN/Browser, Signal, Zoom, and other must haves. After getting everything set up, it was time to try Claude Code again.

Claude Code

This will be my second time with Claude Code. It had worked quite well before, so I decided that I needed to give it another go. And, I was dying to try running Claude Code with a “knowledge wiki.” I had gotten the idea from a post on Threads:

Most people’s experience with LLMs and documents looks like RAG: you upload a collection of files, the LLM retrieves relevant chunks at query time, and generates an answer. This works, but the LLM is rediscovering knowledge from scratch on every question. There’s no accumulation. Ask a subtle question that requires synthesizing five documents, and the LLM has to find and piece together the relevant fragments every time. Nothing is built up. NotebookLM, ChatGPT file uploads, and most RAG systems work this way.

The idea here is different. Instead of just retrieving from raw documents at query time, the LLM incrementally builds and maintains a persistent wiki — a structured, interlinked collection of markdown files that sits between you and the raw sources. When you add a new source, the LLM doesn’t just index it for later retrieval. It reads it, extracts the key information, and integrates it into the existing wiki — updating entity pages, revising topic summaries, noting where new data contradicts old claims, strengthening or challenging the evolving synthesis. The knowledge is compiled once and then kept current, not re-derived on every query.

This is the key difference: the wiki is a persistent, compounding artifact.

I’d stumbled across the instructions (and lost them) a few weeks ago, but I decided to try my luck asking Claude how to get started.

Installing Claude Code

With Claude’s assistance, tt gave me a few commands (nothing special, just ask Claude) and I had Claude Code installed:

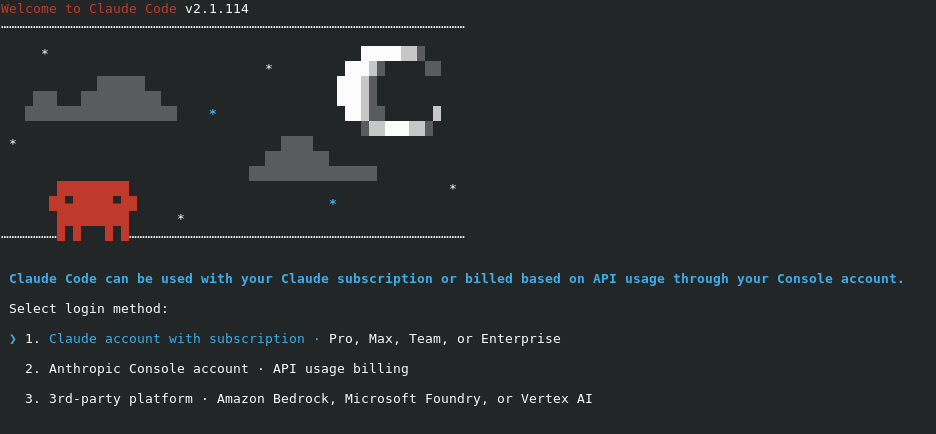

After choosing Option #6 – Dark Mode with ANSI Colors only, I had to pick how to use it:

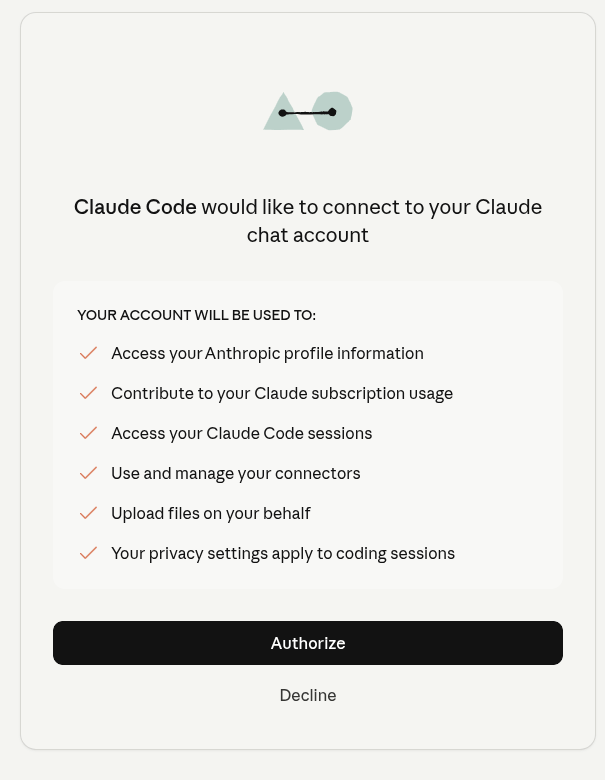

This prompted browser authorization:

Pretty easy, no?

My next step was to switch to the folder I wanted to Claude to have access to. I created a folder called “wiki” (and to which I exported all my markdown files on AI stuff, as well as dropped in my “Knowledge stack” for all my Gen AI projects.

Since I have everything backed up, I’m not worried about what Claude might do to the contents…in fact, I want it to do SOMETHING to organize it. I’m hoping that it can do what browser-based Claude did (which was to create this mini-portfolio of stuff).

Committing My Wiki Folder to Claude’s Care

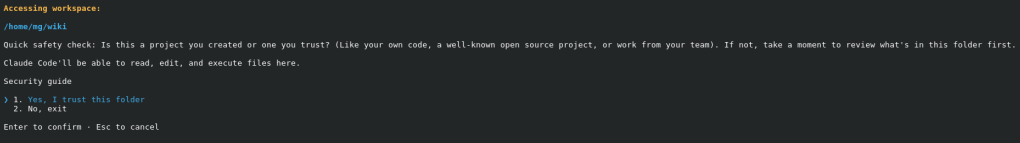

The next step was to set up the wiki folder and allow Claude access. I simply switched to my wiki folder at (cd ~/wiki) and then launched claude with > claude and there we go.

Installing Obsidian to Interact with Markdown Locally

Although I suppose this step wasn’t strictly necessary, I thought I’d give Obsidian a go. The idea behind installing Obsidian was so I could work with the markdown files in the folder (I put everything in Markdown since I save it in Joplin Notes). I could just have easily used a text editor and a directory tools like Krusader (since I’m on MX Linux Plasma X11) or MidnightCommander (MC).

Joplin Notes encrypts everything, but also allows you to export the contents as markdown/text files, which I did and put into my ~/wiki folder. I used Obsidian to interact with the contents, and my goal is to use it to organize content in the wiki folder…or really, to get Claude Code to organize it in a way that makes sense.

Problems Ahead?

Of course, if you read the comments related to llm-wiki website, you will see some critiques. Still, I like the idea of wikifying the intelligence files (the knowledge stack) and having Claude sort through the contents, then organize it. Will that work? I’m not sure. That’s part of the fun.

The question I have going around in my head is:

I want Claude to organize the contents of this folder, and create a set of “intelligence” files that replace all the old contents with a “master index” or menu of contents at the top level. Is that possible?

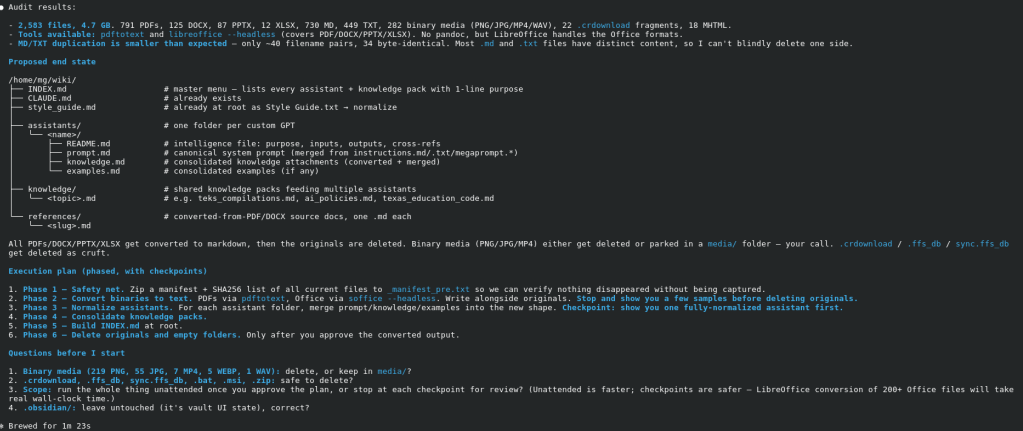

Claude Code’s response?

❯ I want Claude to organize the contents of this folder, and create a set of “intelligence” files that replace all the old contents with a “master index” or menu of contents at the top level. Is that possible?

● Yes, possible — but I want to confirm the “replace” part before doing anything. Two interpretations:

1. Summarize-and-index (non-destructive): I generate top-level intelligence files (e.g. INDEX.md, plus one per category like _Assistants.md, _KnowledgePacks.md, _Policies.md) that describe every folder —

purpose, key files, cross-links. Original folders stay intact. Recommended — the PDFs, DOCX, and prompts are source material you can’t regenerate.

2. Literal replacement: delete the 80+ folders after summarizing. I’d push back on this; the summaries would lose the actual prompt text, knowledge attachments, and reference PDFs that the assistants depend on.

Main tradeoff on option 1: the indexes take some time to build well (I’d need to skim each folder’s main prompt/readme), and they drift as content changes unless regenerated.

Which do you want — and if option 1, do you want one flat INDEX.md or a tiered set (master + per-category)?

What follows next are a series of questions where Claude confirms changes with me (I chose option 2: literal replacement).

It’s amazing how much stuff I have thrown into a folder and/or Joplin Notes regarding Generative AI. Making sense of it would probably involve me deleting content and losing access to stuff I’d curated. I’m essentially giving Claude complete control over the process of organizing my Gen AI files. I might still lose access to something or forget what I’ve lost, but there’s also the real possibility that this process will “surface” content I’d forgotten about and make it easier to access in the future.

Even better, going forward, I’ll be able to add new content and ask Claude to organize it. That’s the plan. Part of this journey is finding out how much I don’t know or understand about using Claude Code in this way. Again, I am prepared for a total disaster.

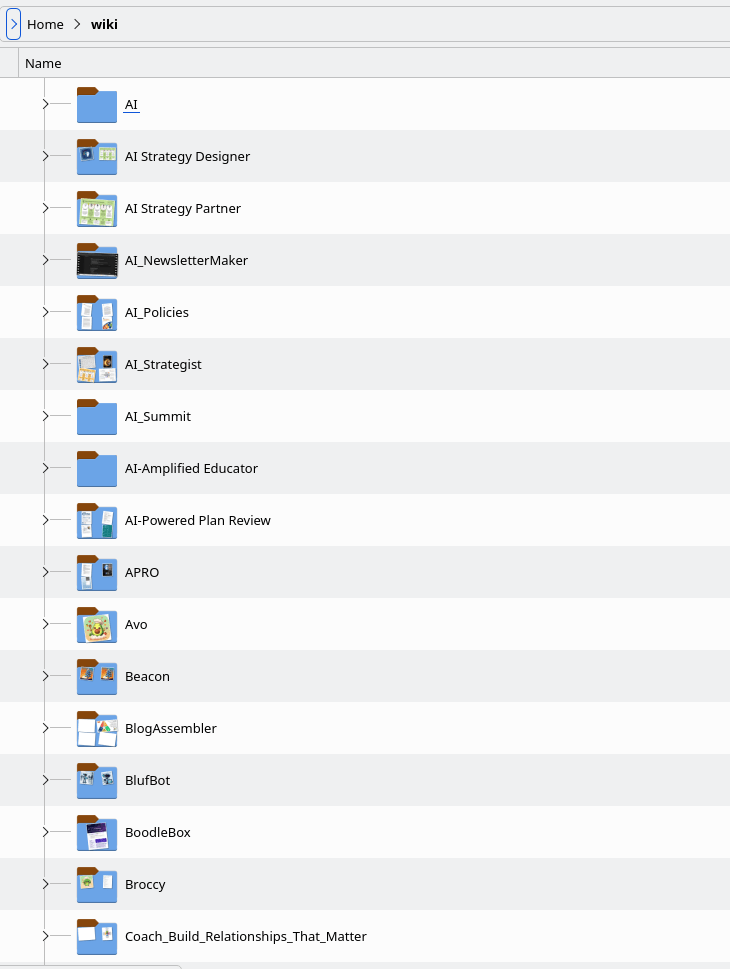

I will be sure to let you know how it goes. In the meantime, here’s a portion of what the folder structure looks like:

I’m curious to see what it looks like AFTER Claude is done.

Discover more from Another Think Coming

Subscribe to get the latest posts sent to your email.

[…] Welcome to Part Two of this blog series. Read Part One. […]